Designing a Decision System to Protect Attention

This experiment treats job search as a decision system rather than an effort problem. Instead of reacting to volume, it introduces a structured filter that reduces noise, protects attention, and improves decision quality. The system narrows a large set of signals into a small number of high-confidence opportunities. The result is lower cognitive load, clearer positioning, and more deliberate choices.

Most people treat a job search as an execution problem. Apply more. Try harder. Stay consistent. I did the same, and after a few weeks of scanning hundreds of roles, I found myself busy but no clearer on what to actually pursue.

The problem wasn't a lack of opportunity. It was signal quality and decision fatigue.

Scanning at volume creates noise. More roles mean more false positives, more emotional energy spent on options that were never really right, and less attention available for the ones that actually matter. Activity increases, but clarity doesn't. The real challenge isn't finding more to apply to. It's deciding where not to invest time.

So I approached it differently. Instead of asking which job to apply to, I asked a different question: what system would consistently surface strong signals and filter out weak ones before effort and emotion take over?

The hypothesis was simple. Better filtering early leads to fewer decisions later, and stronger applications.

The design principle

Before building the phases, two decisions shaped the whole system. First, hard constraints like salary, location, and contract type were deliberately delayed. Checking feasibility too early causes premature rejection of roles that might actually be the right move. Second, the early stages were built around signal and trajectory, not execution readiness. The goal was to build a clear picture of where things were pointing before deciding whether to act.

Phase 0: collect without judgment

The first phase was about building awareness and nothing more. Around 250 signals were collected from job alerts, inbound messages, and the network. No scoring, no filtering, no reactions. The whole point was to avoid committing attention too early. Most people start reacting to roles the moment they see them. This phase separates collection from decision.

Phase 1: directional scoring

Each of the 250 signals was then assessed across five dimensions: how well the role trajectory fits where I want to go, how strong the company signal is, what my personal energy response to the role is, the learning and leverage potential it offers, and how credible the match feels on both sides.

The important thing is that the scoring is directional, not precise. The goal isn't an accurate number. It's a consistent basis for comparison, so that 250 options become roughly 20 worth exploring further. That's about 8% moving forward.
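As a rough sketch of how this kind of directional scoring could be expressed in code: the dimension names below mirror the five listed above, while the coarse 1 to 3 scale, the unweighted sum, and the `Role` and `shortlist` names are assumptions made for illustration, not the exact scoring sheet used in this experiment.

```python
from dataclasses import dataclass

# Hypothetical identifiers for the five dimensions described above.
DIMENSIONS = (
    "trajectory_fit",
    "company_signal",
    "energy_response",
    "learning_leverage",
    "match_credibility",
)


@dataclass
class Role:
    title: str
    scores: dict  # dimension name -> coarse rating, assumed 1 (weak) to 3 (strong)

    def total(self) -> int:
        # Unweighted sum: the number is only a basis for comparison, not a measurement.
        return sum(self.scores[d] for d in DIMENSIONS)


def shortlist(roles, keep):
    """Return the `keep` highest-scoring roles; ties keep their input order."""
    return sorted(roles, key=lambda r: r.total(), reverse=True)[:keep]


roles = [
    Role("Role A", dict(zip(DIMENSIONS, (3, 2, 3, 3, 2)))),
    Role("Role B", dict(zip(DIMENSIONS, (1, 2, 1, 2, 1)))),
    Role("Role C", dict(zip(DIMENSIONS, (2, 3, 2, 2, 3)))),
]
print([r.title for r in shortlist(roles, keep=2)])
```

With 250 collected signals, calling `shortlist(roles, keep=20)` would reproduce the cut to roughly 8%. A coarse scale is deliberate here: it keeps the comparison consistent without pretending to precision.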

Phase 2: the trajectory gate

At this stage, numbers give way to judgment. One question drives it: does this role involve meaningful execution, or does it drift into work I no longer want to do?

This is where most strategic-sounding roles get removed. Some appear senior but lack real ownership. Some are framed around innovation but don't involve the execution that makes innovation real. Some carry interesting titles but are fundamentally sales roles in different packaging. They all look credible at Phase 1. This phase catches what scoring misses. About 14 roles make it through, which is roughly 6% of the original set.

Phase 3: portfolio lens

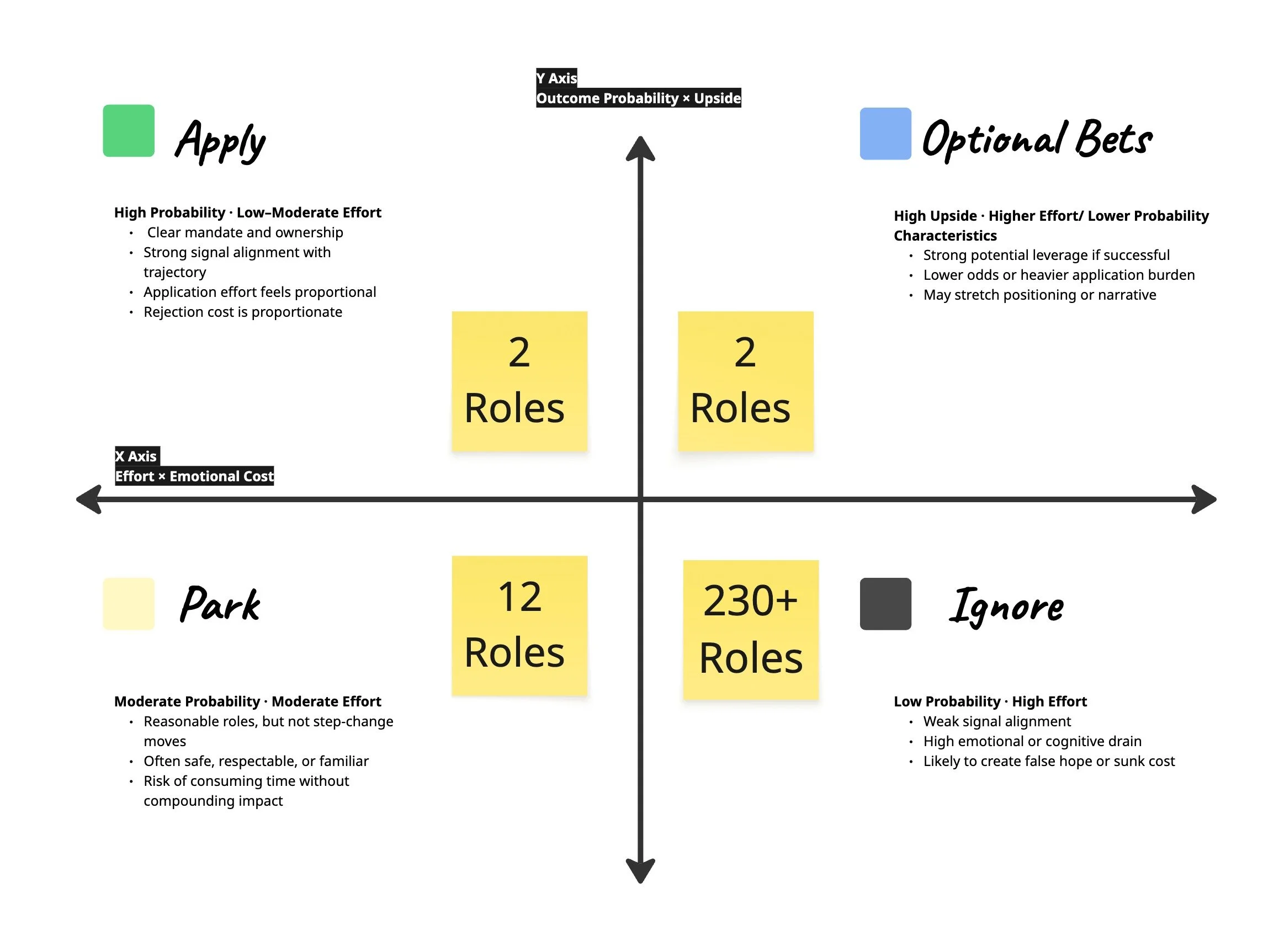

The remaining roles are evaluated not individually but as a portfolio, mapped across two axes: outcome probability multiplied by upside on one side, and effort multiplied by emotional cost on the other.

This produces four categories. Apply sits in the high-probability, lower-effort zone. These roles have clear mandates and ownership, strong alignment with trajectory, and proportionate application effort. Optional Bets sit in the high-upside zone with lower probability or higher effort. They might stretch the positioning or narrative, but the upside if they work is significant. Park holds the roles that are reasonable but not step-change moves. They are often safe, familiar, or respectable, but risk consuming time without compounding impact. Ignore is everything else: roles with weak signal alignment and high emotional or cognitive drain that are likely to create false hope or sunk cost.

The most useful realisation from this step is that 230 of the original 250 roles end up in the Ignore category. Not because they are bad roles. Because applying to them would consume disproportionate attention for a marginal return. About 6 roles move forward.
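One way to make the portfolio lens concrete is a simple two-by-two classifier. This is a minimal sketch under stated assumptions: every input is a subjective estimate on a 0 to 1 scale, the 0.25 cut-offs are arbitrary, and mapping the four categories onto the four quadrants is my simplification of the descriptions above rather than a precise rule.

```python
def classify(probability, upside, effort, emotional_cost,
             value_cut=0.25, cost_cut=0.25):
    """Place a role in one of the four portfolio categories.

    All inputs are assumed to be rough estimates between 0 and 1.
    The cut-off values are hypothetical and should be tuned by feel.
    """
    value = probability * upside    # first axis: outcome probability x upside
    cost = effort * emotional_cost  # second axis: effort x emotional cost

    if value >= value_cut:
        # Worth pursuing; cost decides between a clear Apply and a stretch bet.
        return "Apply" if cost < cost_cut else "Optional Bet"
    # Low expected value; cheap roles are parked, expensive ones ignored.
    return "Park" if cost < cost_cut else "Ignore"


print(classify(0.8, 0.7, 0.4, 0.3))  # high value, low cost
print(classify(0.5, 0.9, 0.8, 0.6))  # high value, high cost
print(classify(0.6, 0.3, 0.3, 0.3))  # low value, low cost
print(classify(0.2, 0.4, 0.9, 0.8))  # low value, high cost
```

Multiplying the factors on each axis means a role needs both probability and upside to register as valuable, and both effort and emotional cost to register as expensive. That is a design choice, not the only reasonable one.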

Phase 4: reading deeply

Only the roles that survive Phase 3 get a full job description review, and the review is slow and deliberate, not a scan.

Reading carefully at this stage exposes things that aren't visible earlier: unclear ownership, organisational complexity, and a weak or undefined mandate. A role can pass every earlier filter and still reveal in the detail of its JD that the actual scope is narrow, or that the team structure would make real execution difficult. After this, 2 to 3 roles remain that feel genuinely strong.

What the system produced

Starting from 250 signals, the system narrowed to 20, then 14, then 6, then 2 to 3 strong applications. That's roughly 0.8 to 1.2% of the original set.
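The funnel arithmetic is easy to check. The short sketch below uses only the counts stated in this post; the `share` helper and the phase labels are names introduced here for illustration.

```python
START = 250  # signals collected in Phase 0

# Survivors after each phase; Phase 4 is a range, so both ends are listed.
survivors = {
    "Phase 1": 20,
    "Phase 2": 14,
    "Phase 3": 6,
    "Phase 4 (low)": 2,
    "Phase 4 (high)": 3,
}


def share(count, total=START):
    """Percentage of the original set, rounded to one decimal place."""
    return round(100 * count / total, 1)


for phase, count in survivors.items():
    print(f"{phase}: {count} roles, {share(count)}% of the original set")
```

The shares come out at 8.0%, 5.6%, 2.4%, and then 0.8% to 1.2% for the final 2 to 3 roles.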

The difference is not volume. It is clarity. Cognitive load drops when the decision is already mostly made before you open the application. Decision confidence increases when you've done the filtering work rather than reacting role by role.

What I learned

The strongest signal in a job search isn't the title. It's where leverage actually sits. Not in abstract strategy. Not in surface-level innovation. But in execution with real ownership and a clear mandate.

That clarity didn't come from thinking harder about what I wanted. It came from building a system and running it.

This is shared early, not as a finished framework but as something tested in practice. The goal isn't to remove uncertainty from job search. It's to reduce the noise enough that the uncertainty that remains is worth sitting with.

Good systems don't eliminate doubt. They make it manageable.